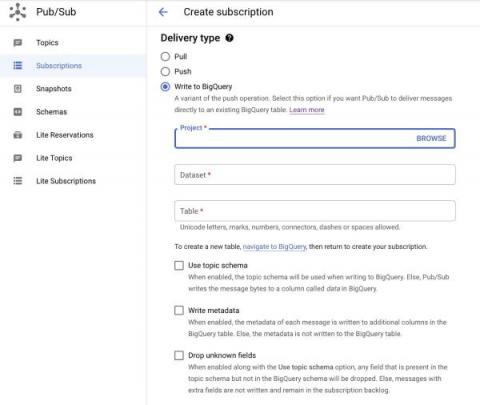

No pipelines needed. Stream data with Pub/Sub direct to BigQuery

Ingesting data from Pub/Sub into BigQuery can be critical to making your latest business data immediately available for analysis. Until today, you had to create an intermediate Dataflow job before your data could be ingested into BigQuery with the proper schema. Dataflow pipelines (including ones built with Dataflow Templates) get the job done well, but they can be more than is needed for use cases that simply require raw data to be exported to BigQuery with no transformation.