
API

REST Testing Made Easy with SoapUI Pro

Kong Brain and Kong Immunity Released!

Four months ago, we declared that API Management is dead and announced our vision for a service control platform. Today, we’re taking a critical step towards fulfilling that vision with the launch of artificial intelligence and machine learning additions to the Kong Enterprise platform – Kong Brain and Kong Immunity.

Our Top API Articles of 2018

Apigee experts published over 50 editorials in 2018 — including dozens here in APIs and Digital Transformation — to help developers, IT architects, and business leaders understand how to maximize the value of APIs and keep pace with constant technological change.

A Toast to an Awesome Year

2018 was an AMAZING year for Google Cloud’s Apigee team. It was, in fact, another “best year ever.” We’re deeply grateful to the companies who use Apigee to accelerate their businesses with APIs.

A Tour of Kong's Routing Capabilities

Kong is very easy to get up and running: start an instance, configure a service, configure a route pointing to the service, and off it goes, routing requests and applying any plugins you enable along the way. But Kong can do a lot more than connect clients to services via routes.
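Here is a minimal sketch of the "configure a service, configure a route" steps. The Python below builds, but does not send, the two Kong Admin API calls: `POST /services` registers a service, and `POST /services/{name}/routes` attaches a route to it. It assumes the Admin API is listening on its default `http://localhost:8001`. The service name, upstream URL, and path are hypothetical placeholders.

```python
import json
import urllib.request

# Assumption: Kong's Admin API is reachable at its default local address.
ADMIN_URL = "http://localhost:8001"


def build_request(path, payload):
    """Build (but do not send) a JSON POST request to the Kong Admin API."""
    return urllib.request.Request(
        ADMIN_URL + path,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def setup_requests(service_name, upstream_url, route_path):
    """Return the two setup calls: create a service, then a route pointing to it."""
    create_service = build_request(
        "/services", {"name": service_name, "url": upstream_url}
    )
    create_route = build_request(
        f"/services/{service_name}/routes", {"paths": [route_path]}
    )
    return [create_service, create_route]


if __name__ == "__main__":
    # Hypothetical values: service "example-service", upstream httpbin.org, path /example.
    for req in setup_requests("example-service", "http://httpbin.org", "/example"):
        print(req.get_method(), req.full_url, req.data.decode("utf-8"))
        # To actually apply the configuration against a running Kong node:
        # urllib.request.urlopen(req)
```

If both calls succeed against a running node, a request to Kong's proxy listener (port 8000 by default) at `/example` should be forwarded to the upstream service.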

Steps to Deploying Kong as a Service Mesh

In a previous post, we explained how the team at Kong thinks of the term “service mesh.” In this post, we’ll start digging into the workings of Kong deployed as a mesh. We’ll talk about a hypothetical example of the smallest possible deployment of a mesh, with two services talking to each other via two Kong instances – one local to each service.
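The two-instance topology can be sketched as a toy, in-process Python model. A call from Service A goes to its local Kong node, which forwards it to the Kong node local to Service B, which then hands it to B. Everything here is hypothetical, including the service names, the `trace` field, and the function names. Real Kong nodes talk to each other over the network and run plugins at each hop.

```python
def make_service(name):
    """A toy service: records itself in the trace and returns a response."""
    def handle(request):
        request["trace"].append(name)
        return {"status": 200, "body": f"hello from {name}", "trace": request["trace"]}
    return handle


def make_proxy(name, next_hop):
    """A toy proxy: records itself, then forwards the request unchanged.
    A real Kong node would also run its enabled plugins at this point."""
    def handle(request):
        request["trace"].append(name)
        return next_hop(request)
    return handle


# Hypothetical topology: Service A calls Service B through two Kong nodes.
service_b = make_service("service-b")
kong_b = make_proxy("kong-b (local to B)", service_b)
kong_a = make_proxy("kong-a (local to A)", kong_b)


def service_a_calls_b(path):
    """Service A only knows its local Kong node; it never addresses B directly."""
    request = {"path": path, "trace": ["service-a"]}
    return kong_a(request)


if __name__ == "__main__":
    response = service_a_calls_b("/orders")
    print(" -> ".join(response["trace"]))
```

Running it prints the hop sequence `service-a -> kong-a (local to A) -> kong-b (local to B) -> service-b`.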

Creating Your First Database API with DreamFactory

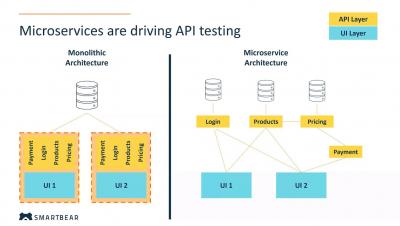

Microservices and Service Mesh

The service mesh deployment architecture is quickly gaining popularity in the industry. In this architecture, remote procedure calls (RPCs) from one service to another inside your infrastructure pass through two proxies: one co-located with the originating service and one at the destination. The local proxy can act as a load balancer and decide which remote service instance to communicate with, while the remote proxy can vet incoming traffic.
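The division of labor between the two proxies can be sketched in a few lines of Python. The local (outbound) proxy picks a destination instance, and the remote (inbound) proxy decides whether to admit the call. Round-robin selection and a caller allowlist are simplifying assumptions made for illustration. A real mesh may use other balancing strategies and vet traffic with mechanisms such as mTLS or auth plugins. All names are hypothetical.

```python
import itertools


class LocalProxy:
    """Outbound side: chooses which remote instance receives each call.
    Round-robin is an assumption here, not a claim about any specific product."""

    def __init__(self, instances):
        self._next = itertools.cycle(instances)

    def pick_instance(self):
        return next(self._next)


class RemoteProxy:
    """Inbound side: vets traffic before it reaches the destination service.
    The caller allowlist is a hypothetical stand-in for real checks."""

    def __init__(self, allowed_callers):
        self.allowed_callers = set(allowed_callers)

    def admit(self, caller):
        return caller in self.allowed_callers


def rpc(caller, local, remotes):
    """Route one RPC through both proxies; return (instance, outcome)."""
    instance = local.pick_instance()
    if not remotes[instance].admit(caller):
        return (instance, "rejected")
    return (instance, "delivered")


if __name__ == "__main__":
    local = LocalProxy(["billing-1", "billing-2"])
    remotes = {
        "billing-1": RemoteProxy(["orders"]),
        "billing-2": RemoteProxy(["orders"]),
    }
    for caller in ["orders", "orders", "intruder"]:
        print(caller, rpc(caller, local, remotes))
```

Here two calls from `orders` land on `billing-1` and then `billing-2`. A third call, from an unlisted caller, is routed to `billing-1` but rejected by that instance's inbound proxy.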