Google BigQuery

Geotab - Data Management Platform with GCP Technology

What's happening in BigQuery: a new ingest format, data type updates, ML, and query scheduling

This month we released several new features in beta, including query scheduling, new BigQuery ML models and functions, and geospatial types and queries. We also released the ORC ingest format into GA. Let’s take a closer look at these features and what they might mean for you.

BigQuery arrives in the London region, with more regions to come

BigQuery, Google Cloud’s serverless, highly scalable, low-cost enterprise data warehouse, was designed to make data analysts productive. With no infrastructure to manage, customers can focus on analyzing data using familiar Standard SQL, while simplifying database administration and data operations. Large enterprises, growing mid-market organizations, and cloud-native startups across the globe can use BigQuery to perform analytics at scale with equal ease.

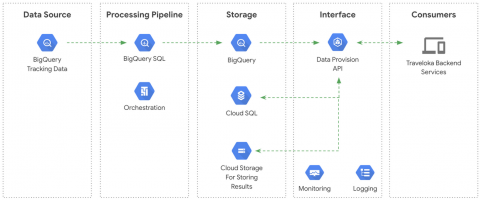

How Traveloka built a Data Provisioning API on a BigQuery-based microservice architecture

To build and develop an advanced data ecosystem is the dream of any data team, yet that often means understanding how the business will need to store and process that data. As Traveloka’s data engineers, one of our most important obligations is to custom-tailor our data delivery tools for each individual team in our company, so that the business can benefit from the data it generates.

Analyzing Geospatial Data with BigQuery GIS - Take5

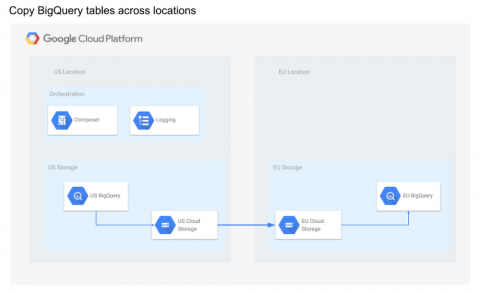

How to transfer BigQuery tables between locations with Cloud Composer

BigQuery is a fast, highly scalable, cost-effective, and fully-managed enterprise data warehouse for analytics at any scale. As BigQuery has grown in popularity, one question that often arises is how to copy tables across locations in an efficient and scalable manner. BigQuery has some limitations for data management, one being that the destination dataset must reside in the same location as the source dataset that contains the table being copied.
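The same-location constraint described above can be illustrated with a small planning sketch. It is not the linked article's implementation: the `TableRef` type, the `plan_transfer` function, and the bucket names are hypothetical, and the export, copy, and load route through Cloud Storage is assumed here as a common workaround rather than taken from the excerpt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TableRef:
    """Hypothetical reference to a table and the location of its dataset."""
    dataset: str
    table: str
    location: str  # e.g. "US" or "EU"


def plan_transfer(source, dest, source_bucket, dest_bucket):
    """Return the ordered steps needed to move a table from source to dest.

    A direct copy is planned only when both datasets share a location;
    otherwise the plan stages the data in Cloud Storage buckets (assumed
    to live in the source and destination locations respectively).
    """
    src = f"{source.dataset}.{source.table}"
    dst = f"{dest.dataset}.{dest.table}"
    if source.location == dest.location:
        return [f"copy {src} -> {dst}"]

    path = f"{source.dataset}/{source.table}/*"
    return [
        f"export {src} -> gs://{source_bucket}/{path}",
        f"copy gs://{source_bucket}/{path} -> gs://{dest_bucket}/{path}",
        f"load gs://{dest_bucket}/{path} -> {dst}",
    ]


if __name__ == "__main__":
    steps = plan_transfer(
        TableRef("sales", "orders", "US"),
        TableRef("sales_eu", "orders", "EU"),
        source_bucket="example-us-bucket",
        dest_bucket="example-eu-bucket",
    )
    for step in steps:
        print(step)
```

In an orchestrator such as Cloud Composer, each planned step would typically map to one task, so a failure in the load stage does not force a repeat of the export.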

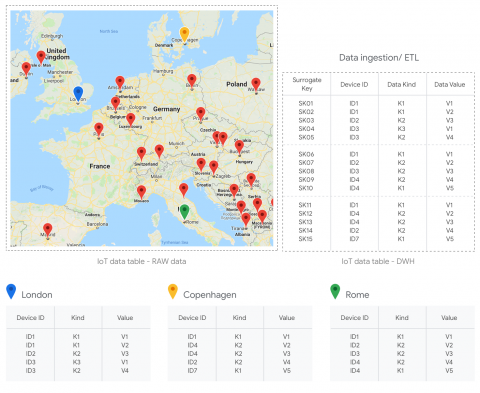

BigQuery and surrogate keys: a practical approach

When working with tables in data warehouse environments, it is fairly common to come across a situation in which you need to generate surrogate keys. A surrogate key is a system-generated identifier that uniquely identifies a record within a table. Why do we need to use surrogate keys? Quite simply: unlike natural keys, they persist over time (i.e., they are not tied to any business meaning) and they allow for unlimited values.
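As a minimal illustration of the idea, and not the approach the linked article takes, the sketch below assigns surrogate keys in plain Python. The `SurrogateKeyRegistry` class, the `with_uuid_keys` function, and the `sk` column name are hypothetical names chosen for this example.

```python
import itertools
import uuid


class SurrogateKeyRegistry:
    """Hands out sequential integer keys, one per distinct natural key."""

    def __init__(self, start=1):
        self._counter = itertools.count(start)
        self._keys = {}

    def key_for(self, natural_key):
        # The first sighting of a natural key allocates the next integer;
        # later lookups return the same value, so the key persists over time.
        if natural_key not in self._keys:
            self._keys[natural_key] = next(self._counter)
        return self._keys[natural_key]


def with_uuid_keys(rows):
    """Return copies of the rows with a random UUID in a new 'sk' field."""
    return [{"sk": str(uuid.uuid4()), **row} for row in rows]


if __name__ == "__main__":
    registry = SurrogateKeyRegistry()
    for email in ["ana@example.com", "bo@example.com", "ana@example.com"]:
        print(email, registry.key_for(email))

    for row in with_uuid_keys([{"name": "Ana"}, {"name": "Bo"}]):
        print(row)
```

The sequential variant needs shared state to stay consistent, which is harder to coordinate in a distributed warehouse; the UUID variant avoids that coordination at the cost of longer, unordered keys.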

Using BigQuery with C#

Ethereum in BigQuery: how we built this dataset

In this blog post, we’ll share more on how we built the BigQuery Ethereum Public Dataset that contains the Ethereum blockchain data. This includes the primary data structures—blocks, transactions—as well as high-value data derivatives—token transfers, smart contract method descriptions.