Comparing Cypress and Playwright: Pros and Cons

Testing web apps to confirm that they meet user requirements is essential to delivering a high-quality user experience. Several tools and frameworks are available for testing web applications, including Playwright, Cypress, and Selenium.

When Should You Consider Metered Billing?

Have you ever paid a bill for electricity, internet, or water? Was that bill based upon the amount of resources you used?

What Is a Data Pipeline and Why Your Ecommerce Business Needs One

Kafka best practices: Monitoring and optimizing the performance of Kafka applications

Apache Kafka is an open-source distributed event streaming platform used by thousands of companies for high-performance data pipelines, streaming analytics, data integration, and mission-critical applications. Administrators, developers, and data engineers who use Kafka clusters often struggle to understand what is happening in their Kafka implementations.

Our top 5 takeaways from the mobile team at Vestiaire Collective

What are the best practices of the mobile team behind one of the world’s leading buy-and-sell platforms for improving productivity and the developer experience? Read on for the five main learnings from our recent customer story.

8 Essential Ecommerce Google Analytics Dashboards Recommended by Ecommerce Experts

CPU Profiling in N|Solid [3/10] The best APM for Node, layer by layer

Review your applications in detail with CPU Profiles in N|Solid and find opportunities to improve your code. You can use the CPU Profiler tool in N|Solid to see which processes consume the largest share of CPU time. This functionality can give you an accurate view of how your application is running and where it is using the most resources.

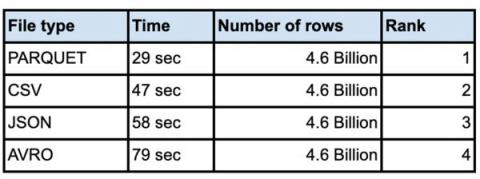

Performance considerations for loading data into BigQuery

It is not unusual for customers to load very large data sets into their enterprise data warehouse. Whether you are doing an initial data ingestion with hundreds of TB of data or incrementally loading from your systems of record, performance of bulk inserts is key to quicker insights from the data. The most common architecture for batch data loads uses Google Cloud Storage (object storage) as the staging area for all bulk loads.

A Two-Pronged Approach to Improving Government Case Management Work

Government organizations have a bad rap for being inefficient. But with outdated technology and limited spending, they aren’t exactly set up for success. And the expectations from stakeholders are high, with funding provided primarily by taxpayer dollars.