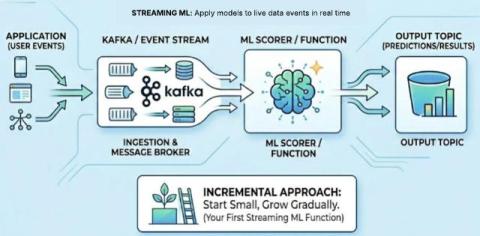

How to Implement Your First ML Function in Streaming

The most effective way to adopt streaming machine learning (ML) is not to rebuild your entire platform but to add a single, high-value inference step to your existing data flow. This incremental approach lets you move from batch processing to real-time decision-making without the risk of a "big bang" migration, so your microservices architecture stays agile and responsive.

What Is Streaming ML?

ML in streaming is the practice of.
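
To make the idea of a single added inference step concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a reference implementation: the event shape, the `score` function (a hard-coded stand-in for a trained model), and the `with_inference` helper are all hypothetical names introduced here, and a plain list plays the role of the existing data flow.

```python
from typing import Callable, Dict, Iterable, Iterator

Event = Dict[str, float]


def score(event: Event) -> float:
    """Hypothetical stand-in for a trained model: flags large amounts."""
    return 1.0 if event["amount"] > 1000.0 else 0.0


def with_inference(
    events: Iterable[Event], model: Callable[[Event], float]
) -> Iterator[Event]:
    """Wrap an existing event stream and attach a model score to each event.

    Events are processed one at a time as they arrive, so nothing upstream
    or downstream of this step has to change.
    """
    for event in events:
        # Copy the event and add the score instead of mutating the original.
        yield {**event, "score": model(event)}


# Assumed example data standing in for the existing flow.
existing_flow = [{"amount": 25.0}, {"amount": 4200.0}]
enriched = list(with_inference(existing_flow, score))
```

Because the step is just a wrapper around the stream, it can be added or removed without touching the producers and consumers on either side, which is the point of the incremental approach.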