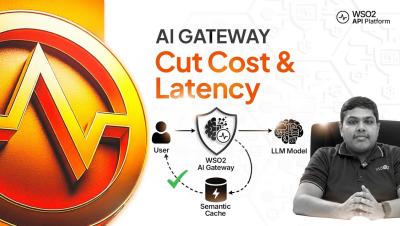

WSO2 AI Gateway: Prompt Management & Semantic Caching

Learn how to keep AI interactions consistent and reduce latency using the WSO2 AI Gateway. This step-by-step tutorial demonstrates how to standardize your LLM requests for quality and efficiency while cutting redundant API costs. We explore "Prompt Management", which enforces organizational guidelines using templates and decorators, and "Semantic Caching", which uses vector embeddings to serve cached responses for semantically similar queries and so minimize expensive LLM calls.
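To make the semantic caching idea concrete, here is a minimal Python sketch of the general technique. It does not show how the WSO2 AI Gateway implements or configures it. All names (`toy_embed`, `SemanticCache`, `answer`, `call_llm`) and the 0.8 threshold are hypothetical, and `toy_embed` is a bag-of-words stand-in for a real embedding model, so it only matches prompts that share words rather than meaning.

```python
import math
import re
from collections import Counter


def toy_embed(text):
    """Hypothetical stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class SemanticCache:
    """Stores (embedding, response) pairs and returns the closest match above a threshold."""

    def __init__(self, threshold=0.8):
        self.threshold = threshold  # assumed value; tune for the embedding model in use
        self.entries = []

    def lookup(self, prompt):
        query = toy_embed(prompt)
        best_score, best_response = 0.0, None
        for embedding, response in self.entries:
            score = cosine_similarity(query, embedding)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None

    def store(self, prompt, response):
        self.entries.append((toy_embed(prompt), response))


def answer(prompt, cache, call_llm):
    """Serve from the cache when a similar prompt was seen; otherwise call the LLM."""
    cached = cache.lookup(prompt)
    if cached is not None:
        return cached
    response = call_llm(prompt)
    cache.store(prompt, response)
    return response
```

In this sketch, `call_llm` is any function that takes a prompt string and returns a response string. A second request whose embedding is close enough to a stored one is answered from the cache without invoking `call_llm` again.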