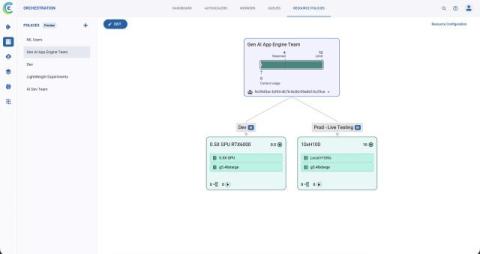

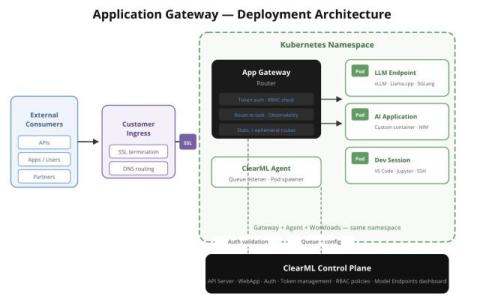

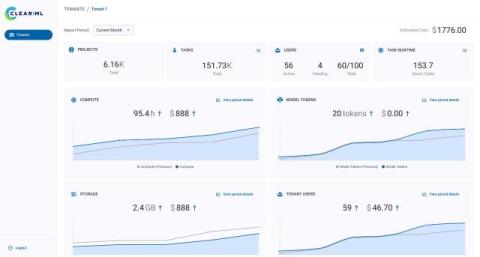

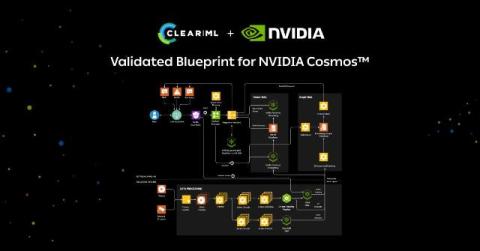

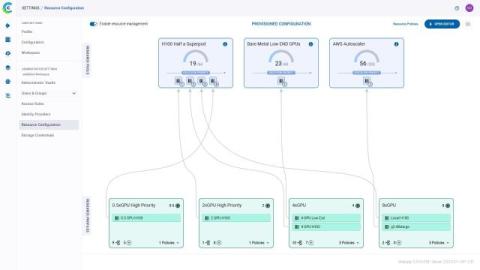

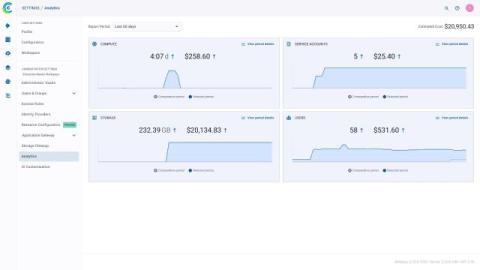

Compute Governance for AI Teams: Pools, Profiles, and Policies in ClearML

By Adam Wolf

This blog covers how ClearML’s compute governance layer (resource pools, profiles, and policies) gives every team fair, prioritized access to shared infrastructure without leaving hardware idle. It accompanies our Enterprise AI Infrastructure Security YouTube series. Watch the corresponding video below.