Is MindsDB Safe for Enterprise Use? Security Risks and Alternatives

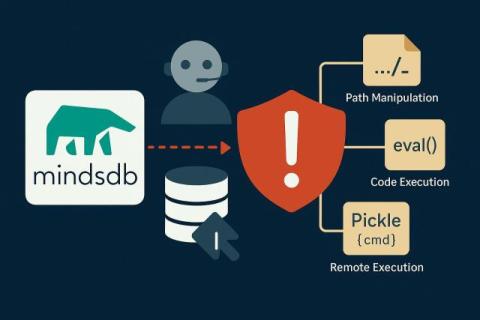

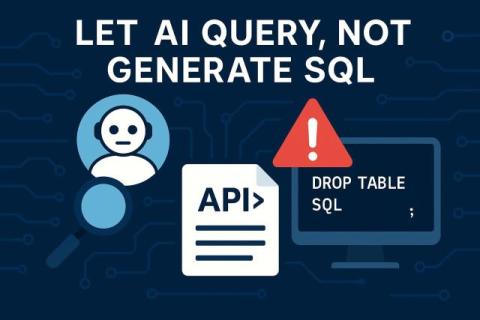

MindsDB has gained attention for its promise to act as a “SQL server for AI,” letting users write natural language prompts that are translated into executable database queries. This may appeal to data scientists and AI teams, but enterprise CISOs and compliance leaders should proceed with caution. Recent disclosures have revealed critical security vulnerabilities in MindsDB’s platform, raising serious questions about its suitability for sensitive or regulated environments.