Connecting the Dots: Simplifying Multi-API Data Flows into Apache Kafka

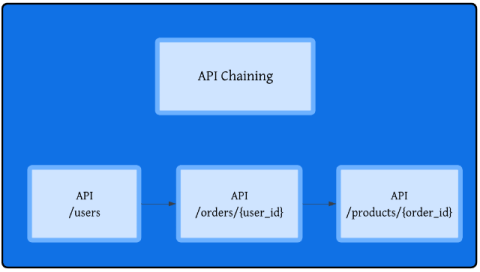

In today’s data-driven software-as-a-service (SaaS) environments, building a complete view of a customer often requires fetching and sharing data that lives across multiple API endpoints. That’s why many of our customers want to use Confluent’s data streaming and integration capabilities to implement real-time API chaining, a technique that lets them automatically follow relationships between APIs.